# 12 Best Audio to Text Converter Tools of 2026 (Ranked)

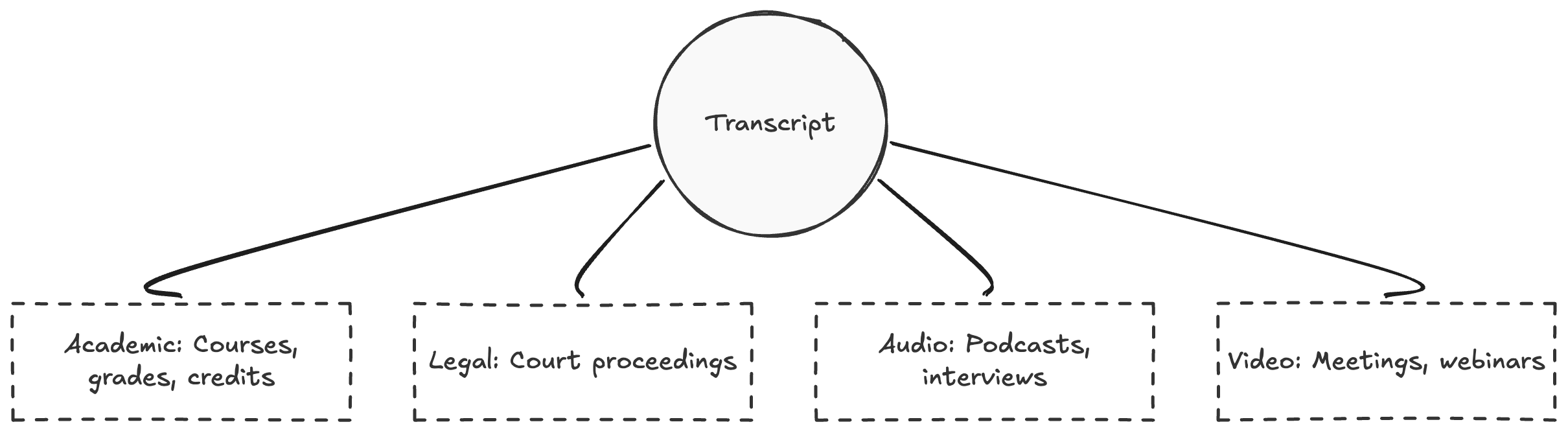

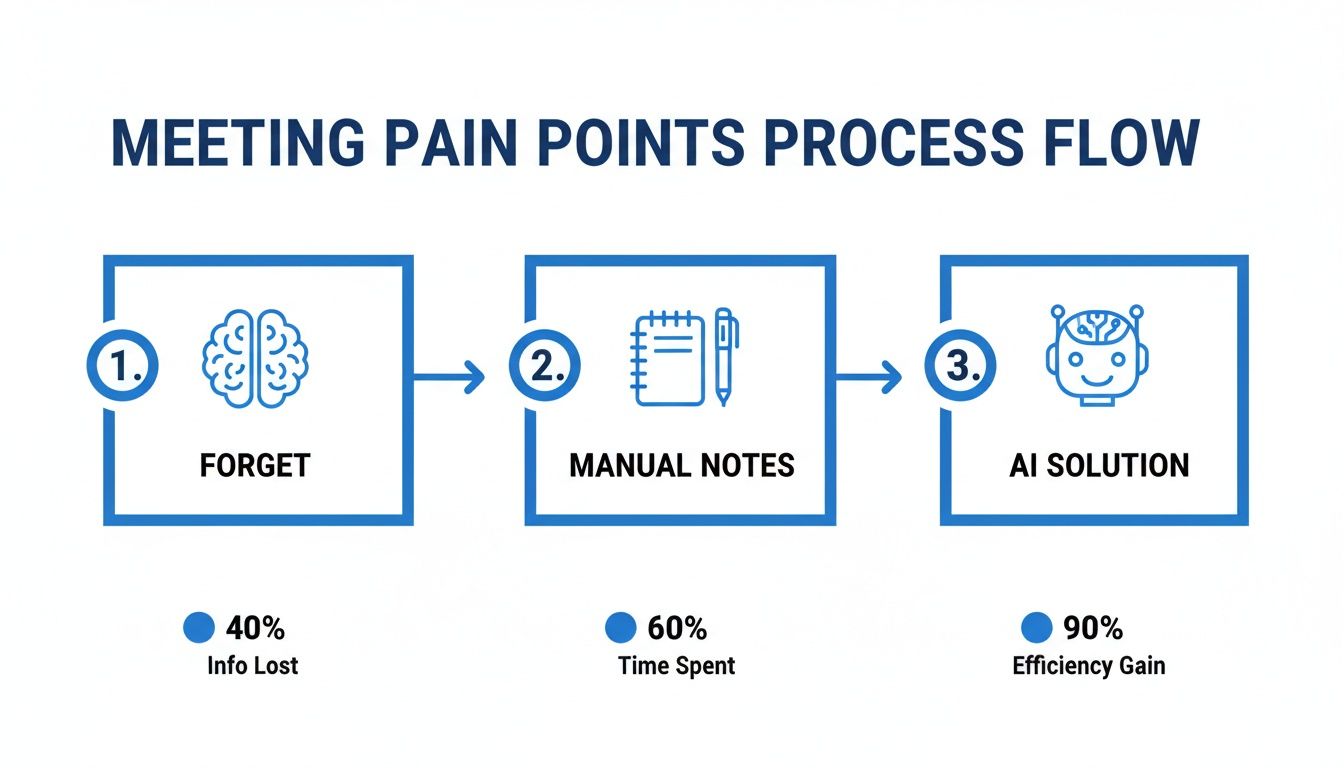

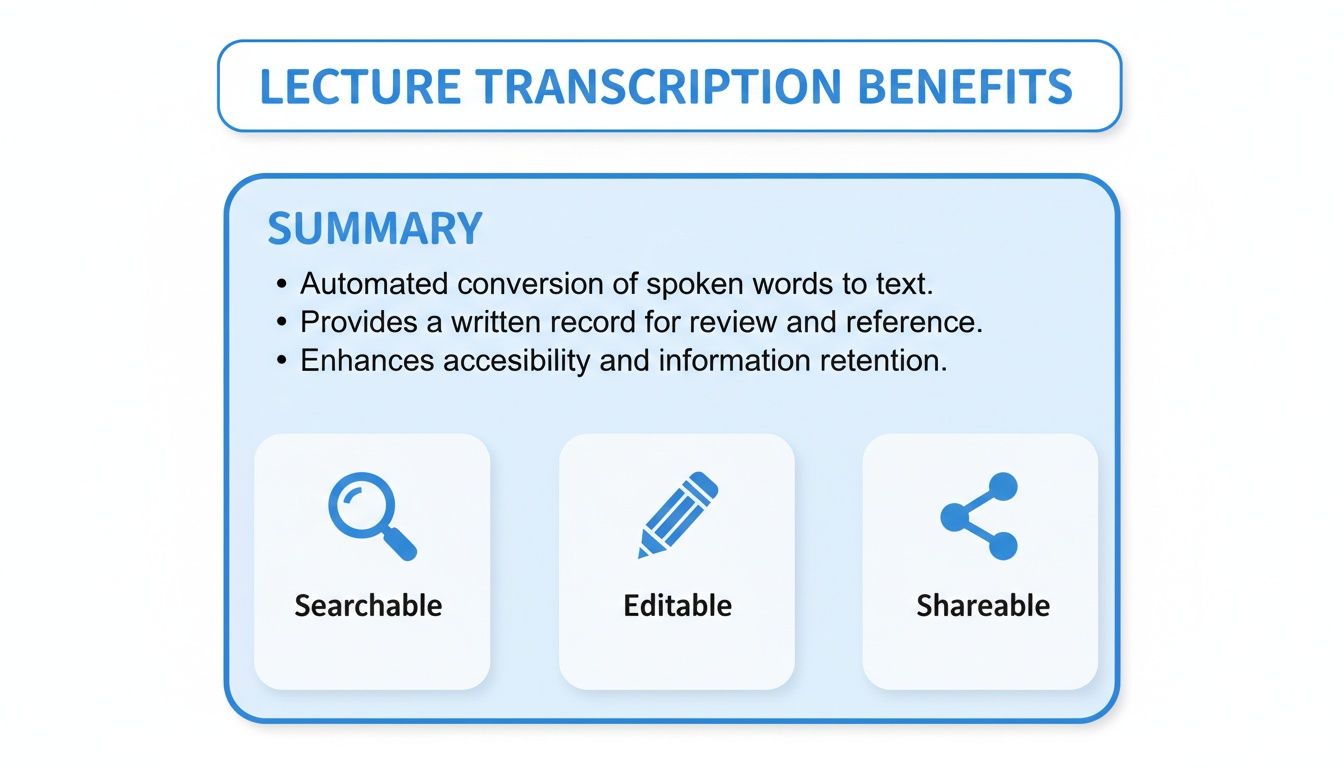

Finding the **best audio to text converter** can feel like searching for a needle in a haystack. You need a tool that doesn't just convert speech, but does it accurately, quickly, and with features that actually match your workflow. Dealing with inaccurate transcripts, slow processing, or a confusing editor wastes time you simply don't have. Whether you're a student transcribing lectures, a podcaster creating show notes, or a business team documenting meetings, the wrong tool is more of a hindrance than a help.

This guide cuts through the noise. We've tested and ranked the top 12 platforms for 2026 to help you find the perfect fit for your specific needs. Instead of just listing features, we provide a deep, practical analysis of what makes each tool stand out and where it falls short. Each review includes real-world screenshots, direct links to the platform, and a clear breakdown of its pros and cons.

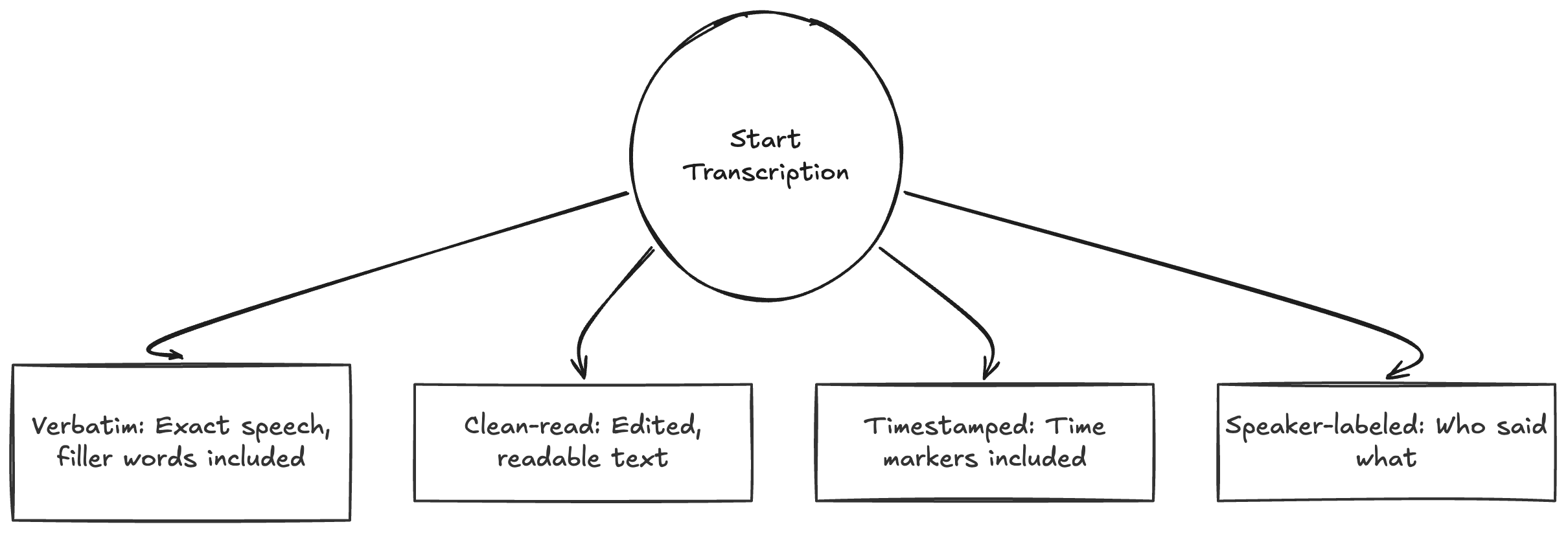

We evaluated each converter on a core set of criteria that truly matter for day-to-day use:

- **Transcription Accuracy:** How well does it handle different accents, background noise, and technical jargon?

- **Speed & Turnaround Time:** How quickly can you get a usable transcript?

- **Editor & Usability:** Is the interface intuitive for correcting errors and formatting the text?

- **Specialized Features:** Does it offer speaker labeling, timestamping, or custom vocabulary?

We also considered advanced features, such as the ability to [auto generate chapters on YouTube](https://timeskip.io/blog/how-do-i-auto-generate-chapters-on-you-tube), which makes long content easier to navigate. This list shows which tools deliver on their promises, saving you the frustration of trial and error.

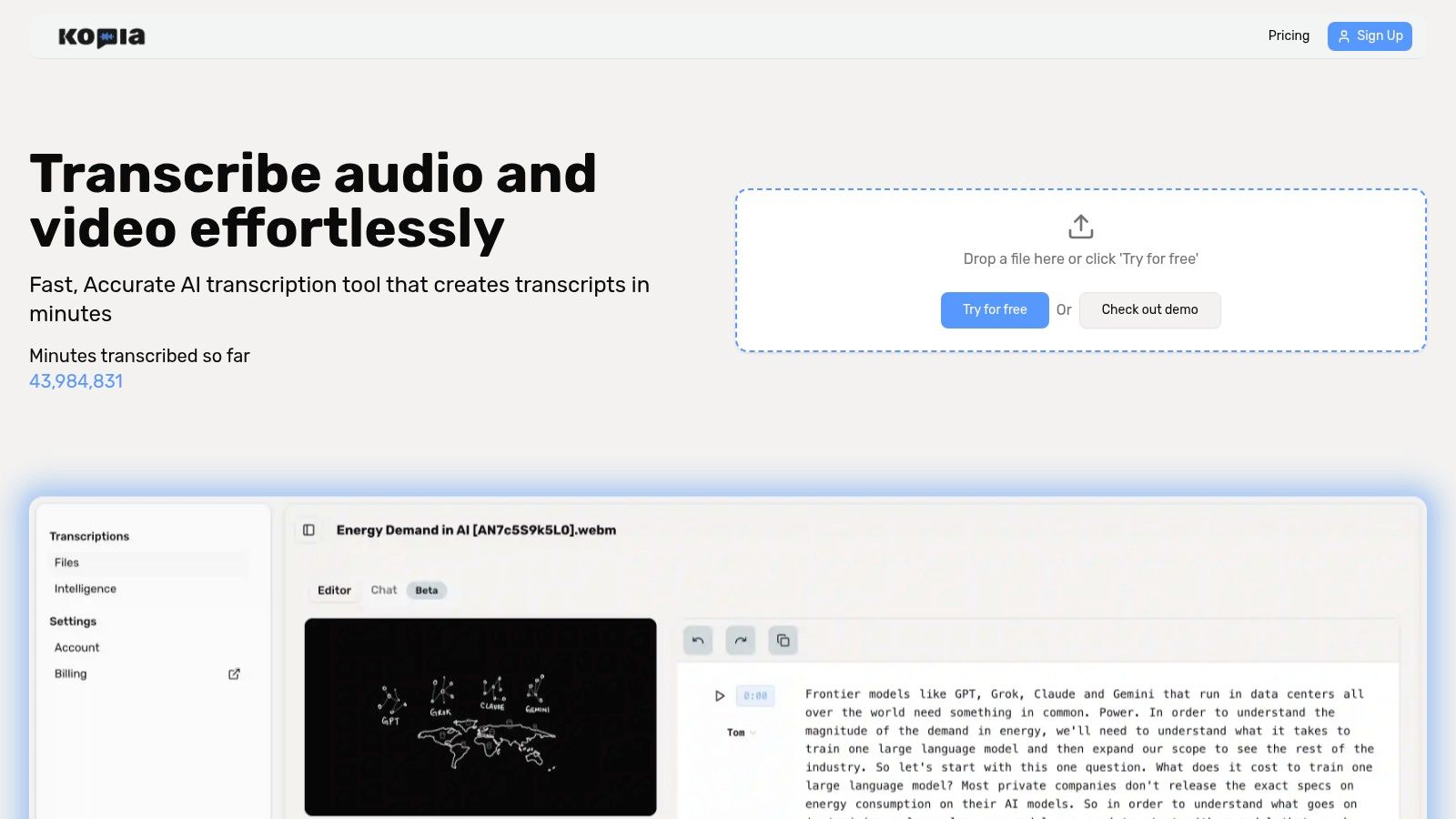

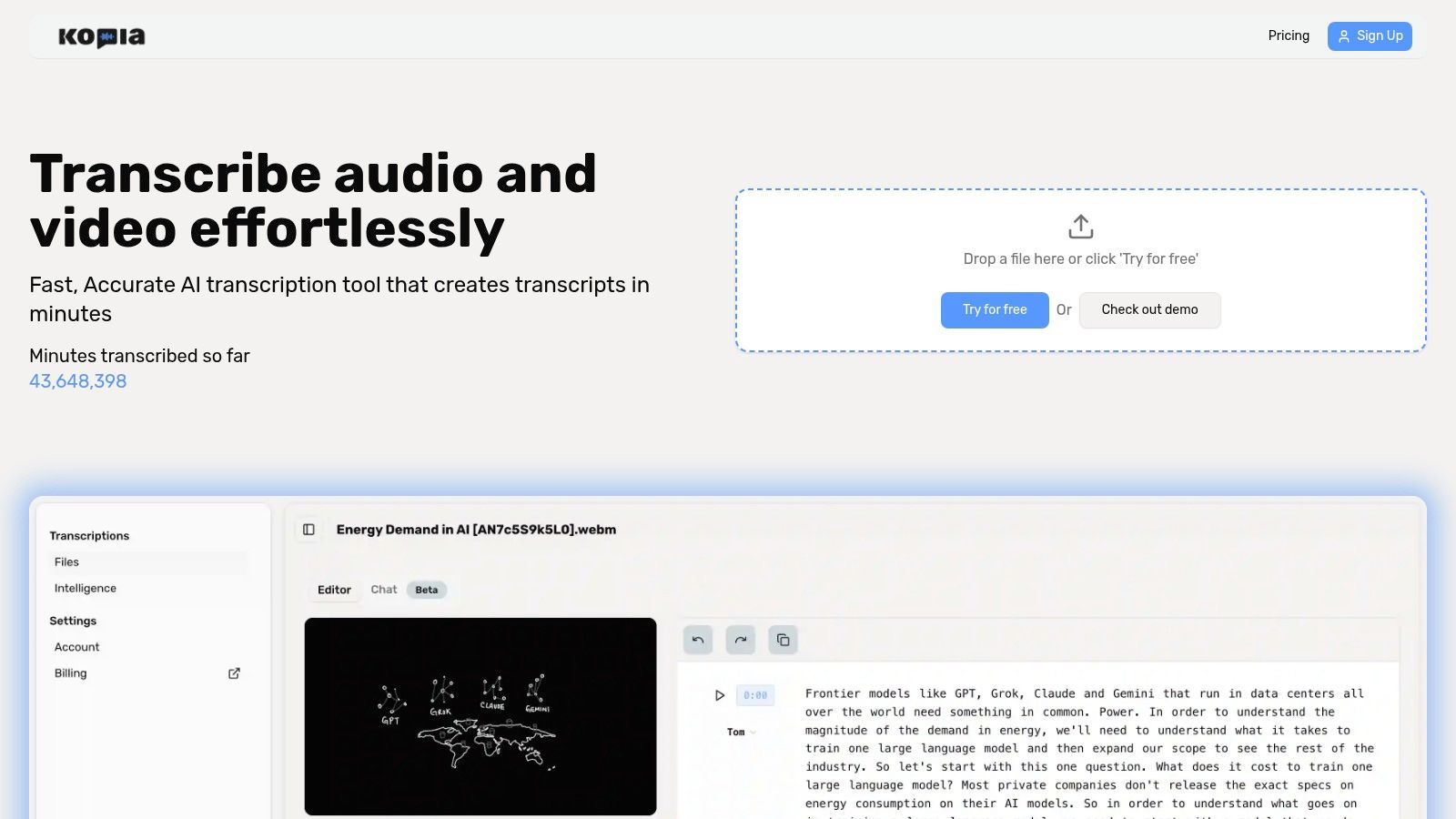

## 1. Kopia.ai

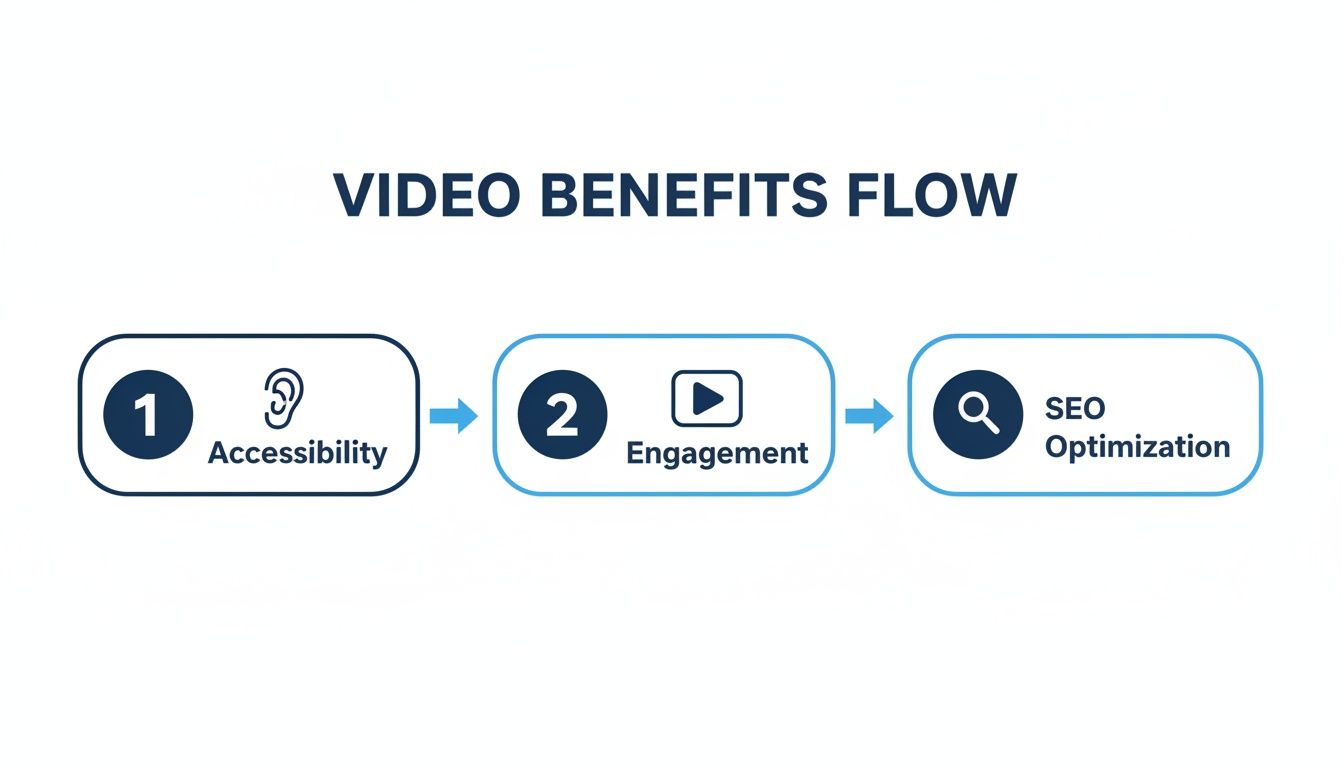

Kopia.ai earns its top spot as the best audio to text converter by combining exceptional accuracy with a suite of AI-powered workflow tools that accelerate content creation. It moves beyond simple transcription, offering a complete platform for turning raw audio and video into polished, publishable assets. Its core strength lies in its ability to quickly generate searchable, editable text from media files in over 80 languages, making it a powerful solution for creators with a global audience.

The platform is designed for efficiency. For podcasters, YouTubers, and researchers, the synchronized, in-browser editor is a standout feature. Clicking any word in the transcript instantly jumps the media player to that exact moment, which makes correcting errors precise and fast. This tight integration of text and audio saves considerable time compared to cross-referencing timestamps in separate applications.
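To make the click-to-seek idea concrete, here is a minimal sketch of how a synchronized editor could map between words and playback positions. It assumes the transcript carries a start and end time for every word; the `Word` type and both function names are hypothetical and are not taken from Kopia.ai or any other product.

```python
from bisect import bisect_right
from dataclasses import dataclass


@dataclass
class Word:
    text: str
    start: float  # seconds from the beginning of the media
    end: float    # seconds from the beginning of the media


def seek_time_for_click(words, index):
    """Return the playback position to jump to when word `index` is clicked."""
    return words[index].start


def word_index_at(words, position):
    """Return the index of the word spoken at `position` seconds, or None.

    Assumes `words` is sorted by start time. Returns None during silence
    or when the position lies outside the transcript.
    """
    starts = [w.start for w in words]
    i = bisect_right(starts, position) - 1
    if i < 0:
        return None
    return i if position < words[i].end else None


# Illustrative data only; real timings would come from the transcription engine.
words = [
    Word("Welcome", 0.00, 0.42),
    Word("to", 0.42, 0.55),
    Word("the", 0.55, 0.70),
    Word("show", 0.70, 1.10),
]

clicked = seek_time_for_click(words, 3)   # jump to the start of "show"
playing = word_index_at(words, 0.5)       # word to highlight at 0.5 s
```

The binary search keeps highlighting cheap even for long recordings, since the editor only needs to look up one position per playback tick.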

### Key Strengths and Features

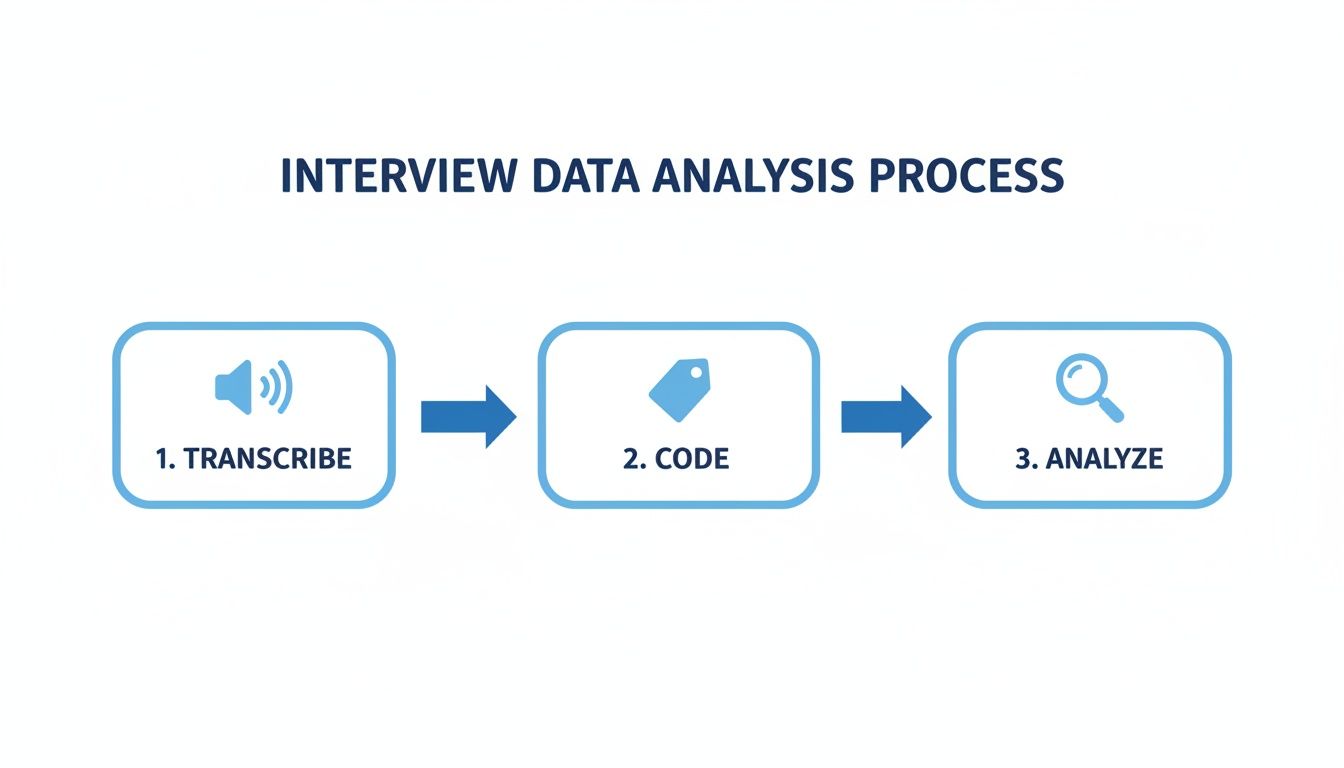

What sets Kopia.ai apart are the integrated AI analysis tools that help you work with your transcript. The "talk to your transcript" feature allows you to ask questions, generate summaries, create chapter markers, and detect key topics directly from the text. This is especially useful for pulling key insights from long interviews, creating show notes for a podcast, or summarizing a lengthy business meeting.

- **Multilingual Support:** Transcribe in 80+ languages and translate into 130+ languages with a single click, ideal for international content distribution.

- **Synchronized Editor:** A word-level, interactive editor makes finding and fixing transcription errors straightforward and quick.

- **AI Content Tools:** Generate summaries, chapters, and topic lists directly from your transcript to speed up editing and publishing.

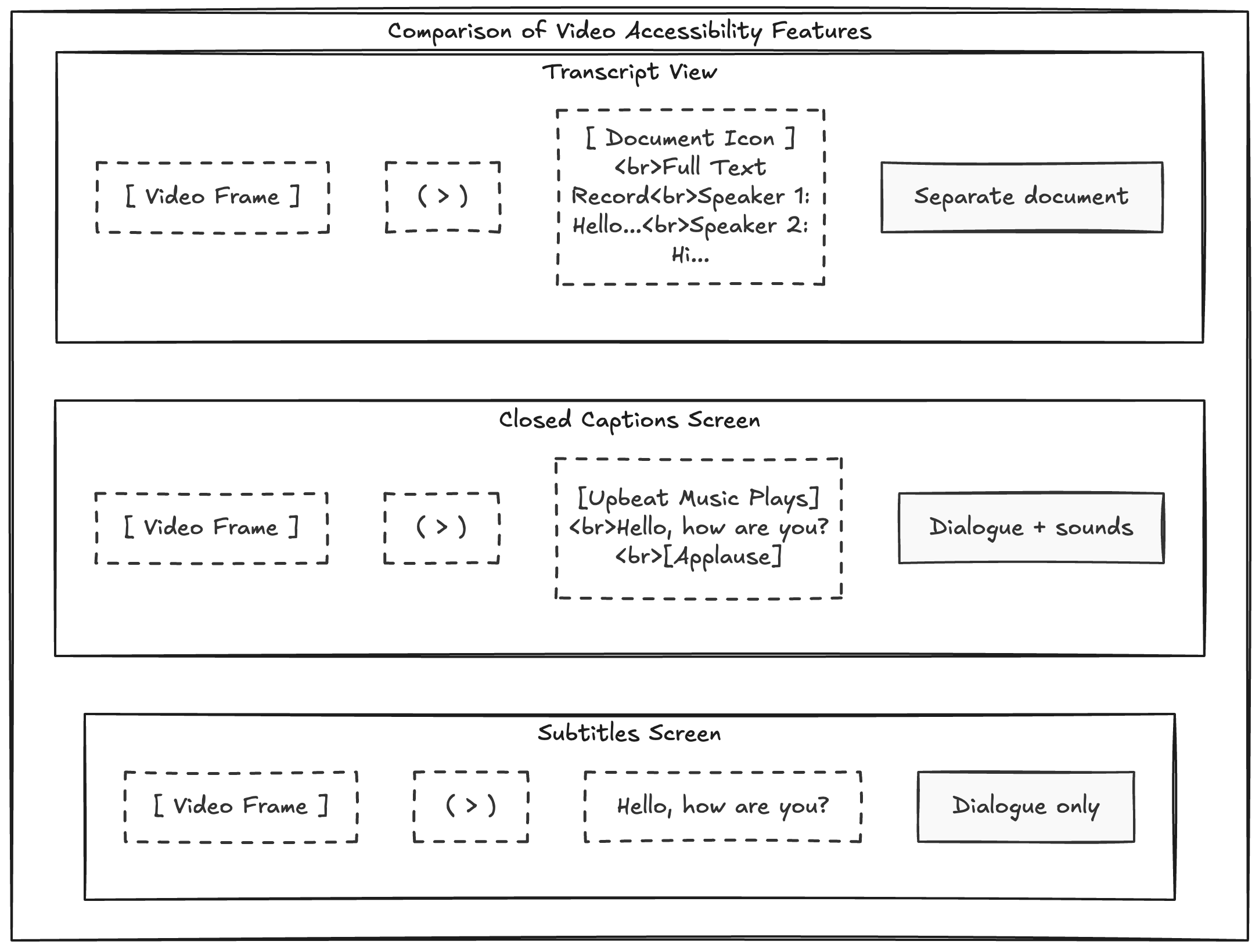

- **Advanced Export Options:** Get your transcript as a text file, SRT/VTT for subtitles, or even burn captions directly into your video for maximum accessibility.

### Practical Use and Considerations

Kopia.ai is a versatile tool for students transcribing lectures, business teams documenting meetings, and creators producing accessible video content. Its flexible plans (Starter, Pro, and Business) are structured to accommodate everyone from individual users to high-volume teams.

Kopia.ai's "millions of minutes transcribed" claim offers some confidence in its reliability, but detailed pricing information requires visiting the website. For organizations in highly regulated fields, it’s worth noting that information on enterprise-grade security certifications was not readily available at the time of review.

**Pros:**

- Fast, accurate transcription with broad language support.

- Interactive editor synced with media for precise corrections.

- Built-in AI tools for summarization and chapter creation.

- Excellent subtitle and captioning features.

**Cons:**

- Specific pricing and plan limits are not detailed upfront.

- Lacks explicit mention of enterprise compliance or on-premises options.

**Website:** [https://kopia.ai](https://kopia.ai)

## 2. Otter.ai

Otter.ai is purpose-built to act as an AI meeting assistant, making it a top choice for professionals, students, and teams who need more than just a basic transcript. It shines in live environments by connecting directly to your calendar and automatically joining Zoom, Google Meet, or Microsoft Teams calls to record and transcribe in real time. This function makes it an excellent audio to text converter for anyone tired of manually taking notes.

The platform excels at turning messy conversations into organized, actionable assets. While you’re in a meeting, you can highlight key points, add comments, and assign action items directly within the live transcript. After the meeting, Otter’s AI generates a concise summary, outlines key topics, and lists all assigned action items, saving significant review time. Its user interface is clean and centered around collaboration, making it easy to share and search through meeting notes with your team.

### Key Features & Use Case

Otter.ai is best for anyone who needs to document and collaborate on live discussions. Its strength is not just transcription but the entire meeting workflow.

- **Best For:** Business teams, students, and educators who need detailed, searchable meeting notes and automated summaries.

- **Real-Time Transcription:** The "OtterPilot" joins your meetings to provide a live, collaborative transcript.

- **AI Meeting Summary:** Automatically generates a 30-second summary, identifies action items, and creates an outline of the discussion.

- **Speaker Identification:** Does a solid job of labeling different speakers, which is crucial for understanding meeting dynamics.

- **Integrations:** Connects with calendars and major video conferencing tools, streamlining the entire recording process.

### Pricing & Limitations

Otter offers a tiered pricing model, including a free plan with limitations.

- **Free Plan:** Includes 300 monthly transcription minutes (30 minutes per conversation) and limited file imports.

- **Pro Plan:** Starts at $10 per user/month (billed annually) for more minutes and features.

- **Business Plan:** $20 per user/month (billed annually) for team features and admin controls.

The primary limitation is its focus on English with specific accents (US and UK), making it less suitable for multilingual needs. Accuracy also depends heavily on clear audio without significant background noise.

**Website:** [https://otter.ai](https://otter.ai)

## 3. Rev

Rev offers a unique hybrid approach, combining a fast AI-powered transcription service with an on-demand network of human professionals. This makes it an ideal audio to text converter for users who need a quick draft but also require the option for near-perfect accuracy on critical files. Its platform is well-suited for professional content creators, researchers, and legal experts who can’t afford mistakes in their final transcripts.

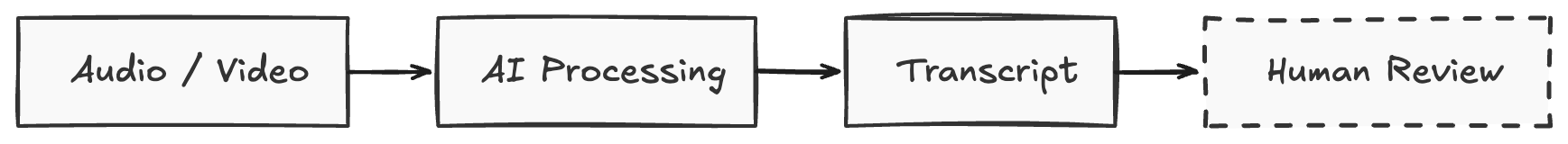

The primary advantage of Rev is its clear upgrade path. You can start with an automated transcript generated in minutes for a low cost, then, if needed, send that same file to a human transcriptionist for a 99% accuracy guarantee. The platform also includes a robust online editor for collaboration and making corrections, along with services for captions and global subtitles. For those needing a deeper dive into different options, understanding various audio to text transcription services can help clarify which model fits best.

### Key Features & Use Case

Rev’s dual AI and human model provides flexibility for a wide range of accuracy and budget requirements, from casual meeting notes to court-admissible evidence.

- **Best For:** Podcasters, journalists, legal professionals, and video producers who need high accuracy and may require human verification.

- **Hybrid Model:** Choose between fast AI transcription (around 90% accuracy) or human transcription (99% accuracy).

- **Time-Coded Transcripts:** All transcripts, AI or human, include speaker labels and timestamps, making them easy to edit and sync with audio or video.

- **Captions and Subtitles:** Offers services for creating video captions and foreign language subtitles, managed through the same platform.

- **Collaboration Tools:** The interactive editor allows teams to review, edit, and share transcripts securely.

### Pricing & Limitations

Rev’s pricing is based on the service selected, often per audio minute, which can become costly for bulk needs.

- **AI Transcription:** Starts at $0.25 per minute.

- **Human Transcription:** Starts at $1.50 per minute with a 12-hour turnaround.

- **AI Captions:** $0.25 per minute.

The main drawback is cost, especially for human services, which can add up quickly compared to subscription-based AI-only platforms. The turnaround time for human transcription, while fast, is not instant, making it less suitable for live transcription needs.
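To see how per-minute pricing adds up, here is a rough cost sketch using the starting rates quoted above ($0.25 per minute for AI, $1.50 per minute for human transcription). The function name is hypothetical, and actual rates may differ by plan, turnaround, or date, so treat the numbers as illustrative.

```python
# Starting rates as quoted in this article; verify current pricing before budgeting.
AI_RATE_PER_MIN = 0.25
HUMAN_RATE_PER_MIN = 1.50


def per_minute_cost(minutes, rate_per_minute):
    """Estimate the cost in dollars of transcribing `minutes` of audio."""
    return round(minutes * rate_per_minute, 2)


# A single 45-minute interview.
interview_ai = per_minute_cost(45, AI_RATE_PER_MIN)
interview_human = per_minute_cost(45, HUMAN_RATE_PER_MIN)

# A month with 10 hours (600 minutes) of recordings.
monthly_ai = per_minute_cost(600, AI_RATE_PER_MIN)
monthly_human = per_minute_cost(600, HUMAN_RATE_PER_MIN)

print(f"45 min:  AI ${interview_ai:.2f}, human ${interview_human:.2f}")
print(f"600 min: AI ${monthly_ai:.2f}, human ${monthly_human:.2f}")
```

At these rates, the human service costs six times as much as the AI draft, which is why reserving it for critical files tends to make sense.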

**Website:** [https://www.rev.com](https://www.rev.com)

## 4. Descript

Descript is a creator-focused tool that treats transcription as the foundation for media editing. Instead of just converting audio to text, it allows you to edit your audio or video files by simply editing the text document. This makes it an exceptional choice for podcasters, YouTubers, and educators who need to remove filler words, restructure sentences, or create clips without navigating a complex timeline editor. It turns the entire content creation process into something as simple as editing a Word document.

The platform is designed as an all-in-one production studio. Beyond transcription, it offers powerful AI features like "Studio Sound" to remove background noise and enhance voice quality, Overdub to create a realistic clone of your voice for correcting mistakes, and automatic subtitle generation. Its workflow is built for content creators who need to move from a raw recording to a polished final product quickly and efficiently, making it more than just a simple audio to text converter.

### Key Features & Use Case

Descript is ideal for content creators who need an integrated transcription and media editing workflow. Its text-based editing approach is a significant time-saver.

- **Best For:** Podcasters, video creators, and educators who need to edit audio/video content alongside transcribing it.

- **Text-Based Editing:** Edit your audio and video by cutting, pasting, or deleting words in the transcript.

- **AI Audio Enhancement:** Features like Studio Sound clean up recordings, while Overdub allows for AI-powered voice correction.

- **Filler Word Removal:** Automatically detects and removes words like "um," "uh," and other repeated words with a single click.

- **Collaboration:** A project-based workflow makes it easy for teams to collaborate on scripts and edits. Podcasters especially benefit from this, and you can learn more about [how to transcribe a podcast](https://kopia.ai/blog/how-to-transcribe-a-podcast-a-podcasters-guide) using these tools.
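The text-based editing and filler word removal described in this section can be illustrated with a small sketch: if every word has a start and end time, deleting a word from the transcript amounts to dropping its time range from the audio. This is not Descript's implementation; the tuple layout, the `FILLERS` set, and the `keep_ranges` function are assumptions made for illustration.

```python
# Hypothetical filler list; a real tool would likely use a richer detector.
FILLERS = {"um", "uh"}


def keep_ranges(words, removed=FILLERS, max_gap=0.0):
    """Return merged (start, end) ranges of audio to keep.

    `words` is a list of (text, start, end) tuples sorted by start time.
    Words whose normalized text is in `removed` are cut; surviving words
    that are at most `max_gap` seconds apart are merged into one range.
    """
    ranges = []
    for text, start, end in words:
        if text.lower().strip(".,!?") in removed:
            continue  # deleting the word deletes its slice of audio
        if ranges and start - ranges[-1][1] <= max_gap:
            ranges[-1][1] = end  # contiguous with the previous kept word
        else:
            ranges.append([start, end])
    return [tuple(r) for r in ranges]


# Illustrative timings only.
words = [
    ("So", 0.0, 0.3),
    ("um", 0.3, 0.8),
    ("today", 0.8, 1.2),
    ("we", 1.2, 1.4),
    ("uh", 1.4, 1.9),
    ("begin", 1.9, 2.4),
]

cuts = keep_ranges(words)
```

An audio editor could then concatenate the kept ranges to produce the cleaned recording, which is the general idea behind editing media by editing its transcript.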

### Pricing & Limitations

Descript’s pricing is based on transcription hours and access to advanced features.

- **Free Plan:** Includes 1 hour of transcription and limited access to its features.

- **Creator Plan:** Starts at $12 per user/month (billed annually) for 10 hours of transcription.

- **Pro Plan:** $24 per user/month (billed annually) for 30 hours of transcription and more advanced AI features.

The main limitation is that it can be overkill for users who only need a basic transcript without any editing capabilities. The learning curve is also steeper than a simple transcription service due to its extensive feature set.

**Website:** [https://www.descript.com](https://www.descript.com)

## 5. Trint

Trint is a newsroom-grade audio to text converter designed for journalists, media houses, and content teams who need more than a simple transcript. Its core strength lies in its powerful browser-based editor, which combines an automated transcript with the original audio or video file. This setup allows users to verify and correct the text with ease, making it perfect for creating highly accurate, quote-ready content from interviews, press conferences, and recorded events.

The platform is built around editorial workflows and collaboration. Multiple users can work on a single transcript simultaneously, highlighting key quotes, leaving comments, and assigning sections. This makes Trint a strong choice for teams working against a deadline. Its focus on turning raw audio into a searchable, editable, and collaborative asset sets it apart for professional content creation where accuracy and speed are critical.

### Key Features & Use Case

Trint is engineered for professionals in media and research who need to find and share important moments from audio and video fast. The collaborative editor is its standout feature.

- **Best For:** Journalists, content marketers, researchers, and production teams needing verifiable transcripts and collaborative editing tools.

- **Time-Coded Editor:** The interactive editor links every word to the original media, allowing for quick verification and precise edits.

- **Live Transcription:** Captures audio in real-time, making it useful for live events, breaking news, or instant meeting documentation.

- **Multilingual Support:** Transcribes accurately in over 40 languages, catering to global teams and international content.

- **Collaboration Tools:** Allows teams to highlight, comment, and edit transcripts together, streamlining the post-production and fact-checking process.

### Pricing & Limitations

Trint’s pricing is geared toward professional and team use, with plans structured around features and user count.

- **Starter Plan:** Begins at $60 per user/month for individuals transcribing up to 7 files monthly.

- **Advanced Plan:** $75 per user/month for unlimited transcriptions and more collaboration features.

- **Enterprise Plan:** Custom pricing for larger teams needing advanced security and workflow integrations.

The main limitation is its price point, which is higher than many other converters, making it less accessible for casual users or students. The trial also has strict limits on file duration and count.

**Website:** [https://trint.com](https://trint.com)

## 6. Sonix

Sonix is a fast and reliable audio to text converter designed for professionals who need high-quality transcripts, translations, and subtitles. Its major advantage is its powerful in-browser editor, which allows users to easily polish AI-generated text. The platform synchronizes audio playback with the text, highlighting words as they are spoken, which makes correcting errors simple and intuitive. This feature is particularly useful for journalists, podcasters, and video editors who require word-for-word accuracy and precise timing.

The service stands out with its robust support for subtitling and captioning. Users can export transcripts in various subtitle formats like SRT and VTT, adjust character-per-line limits, and even burn captions directly into a video file. Sonix also offers automated translation into over 40 languages, making it an excellent choice for creators looking to expand their content's reach to a global audience. Its combination of speed, an interactive editor, and strong multimedia features makes it a top contender for content production workflows.
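For readers curious about what an SRT export involves, the following is a minimal sketch that turns timed segments into SRT text and wraps each caption to a character-per-line limit. It is not Sonix's exporter; the function names, the segment layout, and the 42-character default are assumptions chosen for illustration.

```python
import textwrap


def srt_timestamp(seconds):
    """Format a time in seconds as an SRT timestamp (HH:MM:SS,mmm)."""
    ms = round(seconds * 1000)
    hours, ms = divmod(ms, 3_600_000)
    minutes, ms = divmod(ms, 60_000)
    secs, ms = divmod(ms, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"


def to_srt(segments, max_chars=42):
    """Build SRT text from (start, end, text) segments.

    Each caption is numbered from 1 and its text is wrapped so that no
    line exceeds `max_chars` characters (assuming no single word is longer).
    """
    blocks = []
    for number, (start, end, text) in enumerate(segments, start=1):
        lines = textwrap.wrap(text, width=max_chars)
        timing = f"{srt_timestamp(start)} --> {srt_timestamp(end)}"
        blocks.append("\n".join([str(number), timing, *lines]))
    return "\n\n".join(blocks) + "\n"


# Illustrative segments only.
segments = [
    (0.0, 2.5, "Hello and welcome"),
    (2.5, 7.0, "Today we compare twelve tools that turn recorded speech into text."),
]

print(to_srt(segments))
```

WebVTT output is similar in spirit: it starts with a `WEBVTT` header line and uses a period instead of a comma before the milliseconds.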

### Key Features & Use Case

Sonix is ideal for media professionals and organizations that need more than just a basic transcript and require tools for editing, translating, and creating subtitles.

- **Best For:** Podcasters, video creators, journalists, and researchers needing a polished transcript with precise timestamps and subtitle outputs.

- **Pay-As-You-Go Transcription:** Offers a flexible pricing model based on the duration of the audio or video you need to transcribe.

- **Advanced Web Editor:** Provides word-level timing, speaker labeling, and a suite of tools to review and refine the transcript.

- **Subtitle and Caption Support:** Exports to popular formats (SRT, VTT) and offers subtitle burn-in capabilities for video.

- **Team Collaboration:** Features like team workspaces and custom dictionaries make it suitable for organizational use.

### Pricing & Limitations

Sonix uses a usage-based model that combines a subscription fee with per-hour rates for transcription.

- **Standard Plan (Pay-as-you-go):** $10 per hour for transcription.

- **Premium Plan:** Starts at $5 per month (plus per-hour rates) for access to more features and collaboration tools.

- **Enterprise Plan:** Custom pricing for advanced needs.

The main limitation is its pricing structure; the combination of a subscription fee plus per-hour charges can be more complex than a simple flat-rate plan. Some features, like automated translation, come with additional costs, which can increase the total expense for users needing the full suite of tools.

**Website:** [https://sonix.ai](https://sonix.ai)

## 7. Happy Scribe

Happy Scribe stands out as a versatile audio to text converter by offering both AI-powered and human-powered services under one roof. This hybrid approach makes it ideal for users who need the speed of automation for some projects but demand near-perfect accuracy for others. It caters to a global audience with extensive language support for transcription, subtitling, and translation, serving creators, universities, and multilingual teams.

The platform is built for professional workflows, allowing users to create style guides and glossaries to ensure brand consistency and correct terminology across all transcripts. Its collaborative editor lets teams work together on perfecting documents, while numerous integrations with tools like YouTube, Zoom, and Google Drive make uploading and managing files simple. This flexibility between speed, accuracy, and collaboration solidifies its position as a go-to solution for high-stakes projects.

### Key Features & Use Case

Happy Scribe is best for professionals and creators who need a flexible workflow, balancing the speed of AI with the option for human-verified accuracy, especially for multilingual content.

- **Best For:** Podcasters, video creators, journalists, and researchers needing accurate transcripts and subtitles in multiple languages.

- **Hybrid Service Model:** Choose between fast, affordable AI transcription or a human-made service for up to 99% accuracy.

- **Extensive Language Support:** AI transcription is available in over 70 languages, with broad support for subtitles and translations.

- **Advanced Subtitle Editor:** Provides powerful tools to edit, format, and export subtitles in various formats (SRT, VTT, etc.).

- **Collaboration Tools:** Features like style guides, glossaries, and a shared workspace are excellent for teams.

### Pricing & Limitations

Happy Scribe offers a tiered subscription model for its AI services and a per-minute rate for human services.

- **Free Trial:** Includes a few minutes to test the platform.

- **Basic Plan:** Starts at $10/month (billed annually) for 120 minutes of AI transcription.

- **Pro Plan:** $17/month (billed annually) for 300 minutes and more features.

- **Human-Made Service:** Starts at $1.75 per minute, with prices varying by language and turnaround time.

The main limitation is that the human service can become expensive, particularly for large volumes of audio or less common languages. Additionally, some key features and the removal of watermarks are only available on higher-tiered subscription plans.

**Website:** [https://www.happyscribe.com](https://www.happyscribe.com)

## 8. Deepgram

Deepgram is a developer-focused audio to text converter that provides a powerful speech-to-text API for teams building voice-enabled applications. Unlike platforms with user-facing editors, Deepgram delivers the raw engine for developers to integrate transcription directly into their own products, such as voice assistants, analytics tools, or media workflows. It offers a choice between different AI models, allowing users to balance the need for speed against the demand for accuracy, depending on the specific application.

The platform is designed for customization and scale, providing robust documentation for developers to get started quickly. Its strengths lie in its low-latency real-time streaming and ability to handle high volumes of pre-recorded audio. This makes it ideal for building features that require instant transcription, like live call analysis or in-app voice commands. For those curious about the technology, learning more about how Automatic Speech Recognition (ASR) works can provide helpful context.

### Key Features & Use Case

Deepgram is best suited for product teams and developers who need to integrate a fast, reliable, and scalable transcription engine into their software. It is not an out-of-the-box tool for end-users.

- **Best For:** Developers building voice agents, companies analyzing call center data, and media platforms that need to process audio at scale.

- **Multiple AI Models:** Choose between models optimized for speed (Nova-2) or accuracy to fit specific needs like real-time conversation versus archival transcription.

- **Real-Time Streaming:** Provides extremely low-latency transcription for live audio feeds, essential for interactive voice applications.

- **Advanced Features:** Offers add-ons like speaker diarization, profanity filtering, redaction, and topic detection through its API.

- **Language Support:** Supports transcription in over 30 languages and dialects.

### Pricing & Limitations

Deepgram’s pricing is transparent and usage-based, making it easy to scale costs with usage.

- **Free Plan:** A generous free tier offers $200 in credits to start building and testing the API.

- **Pay-As-You-Go:** After using the free credits, pricing is calculated per minute of audio processed, with different rates for pre-recorded and streaming audio.

- **Enterprise:** Custom plans are available for high-volume users requiring dedicated support and features.

The main limitation is that Deepgram is not a standalone application; it requires technical knowledge to implement. It’s a tool for building, not a ready-made solution for an individual looking to transcribe a few files without coding.

**Website:** [https://deepgram.com](https://deepgram.com)

## 9. AssemblyAI

AssemblyAI is not a typical end-user application but a powerful API designed for developers and businesses that need to build audio intelligence features into their own products. It operates as an engine under the hood, providing a feature-rich speech-to-text service that goes far beyond basic transcription. For teams building media pipelines, analytics tools, or advanced meeting assistants, AssemblyAI offers a robust toolkit for extracting deeper meaning from audio data.

The platform's strength lies in its "Audio Intelligence" models, which can automatically summarize content, detect topics, identify important entities, and even analyze sentiment. This makes it a great audio to text converter for developers who need to create searchable, analyzable, and actionable content from raw audio streams or files. Rather than just returning a wall of text, the API provides structured data that can power complex applications.

### Key Features & Use Case

AssemblyAI is built for technical teams that need a scalable, API-first transcription and audio analysis solution. Its value comes from the ability to automate post-transcription workflows.

- **Best For:** Developers, product teams, and businesses building applications that require transcription plus deeper audio insights like summarization and topic detection.

- **Audio Intelligence:** Offers a suite of models for summarization, sentiment analysis, topic detection, and identifying key phrases or entities.

- **Developer-Focused:** Provides a well-documented API with both streaming and batch endpoints, making it flexible for various applications.

- **High Accuracy:** Features universal and LLM-enhanced models designed for high accuracy across different audio qualities and accents.

- **Compliance Options:** Supports HIPAA and offers EU data residency options, catering to businesses with strict compliance requirements.

### Pricing & Limitations

AssemblyAI uses a pay-as-you-go model that varies based on the models and features used.

- **Free Tier:** A generous free tier is available for developers to test and build with the API.

- **Paid Usage:** Pricing is usage-based and can become complex depending on which models (Core, Audio Intelligence, etc.) are implemented.

The main limitation is its target audience. It is not a tool for casual users seeking a simple interface to upload a file. It requires technical knowledge to implement and is best suited for integration into larger software projects.

**Website:** [https://www.assemblyai.com](https://www.assemblyai.com)

## 10. Google Cloud Speech-to-Text (V2)

Google Cloud’s Speech-to-Text V2 is not a user-facing application but a powerful, developer-focused API for integrating high-quality transcription into other products. Built on Google’s advanced Chirp AI models, it offers excellent multilingual accuracy for both real-time streaming and batch processing of pre-recorded audio files. This makes it a go-to solution for engineering teams building features that need a reliable audio to text converter at their core.

Unlike consumer-grade tools, its strength lies in its scalability, deep integration with the Google Cloud Platform (GCP), and enterprise-grade security. Developers can connect it to services like Cloud Storage for audio files and BigQuery for data analysis, creating robust, automated transcription workflows. It's designed for technical users who require programmatic access to transcription and are comfortable working with APIs rather than a graphical interface.

### Key Features & Use Case

This service is ideal for developers and businesses that need to embed transcription capabilities directly into their own applications and systems at a massive scale.

- **Best For:** Engineering teams, enterprise applications, and companies needing a scalable, API-driven transcription engine with broad language support.

- **High-Quality AI Models:** Uses Google’s Chirp models for improved accuracy across 80+ language variants and dialects.

- **Streaming & Batch Modes:** Supports both live, real-time transcription and processing of large volumes of stored audio files.

- **Deep GCP Integration:** Natively connects with Cloud Storage, Pub/Sub, and other Google Cloud services for building end-to-end data pipelines.

- **Enterprise-Ready:** Includes features like data residency controls, customer-managed encryption keys (CMEK), and detailed audit logging for compliance.

### Pricing & Limitations

Google Cloud offers a pay-as-you-go model based on the volume of audio processed, with a free tier to get started.

- **Free Tier:** Includes 60 minutes of free audio processing per month.

- **Pay-As-You-Go:** V2 pricing starts around $0.016 per minute for batch processing, with prices varying based on features and volume.

- **Multi-Channel Billing:** Be aware that audio with multiple channels is billed for each channel separately, which can increase costs significantly.

The main limitation is its complete lack of an end-user interface or editor. It requires developer expertise to set up and is not a standalone tool for individuals looking to quickly transcribe a file.
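The multi-channel billing note is easy to underestimate, so here is a small worked sketch. It assumes the approximate $0.016 per minute batch rate quoted above and ignores the free tier and any volume discounts; the function names are hypothetical and this is not an official pricing calculator.

```python
def billed_minutes(audio_minutes, channels):
    """Each channel is billed separately, so billed time scales with channel count."""
    return audio_minutes * channels


def estimated_cost(audio_minutes, channels, rate_per_minute=0.016):
    """Rough cost in dollars; the default rate is the approximate figure from this article."""
    return round(billed_minutes(audio_minutes, channels) * rate_per_minute, 2)


# 100 minutes of audio, first as mono and then as a two-channel call recording.
mono_cost = estimated_cost(100, channels=1)
stereo_cost = estimated_cost(100, channels=2)

print(f"mono: ${mono_cost:.2f}, stereo: ${stereo_cost:.2f}")
```

Under these assumptions, a stereo recording costs twice as much as the same audio mixed down to mono, so downmixing before upload can be worth considering when channel separation is not needed.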

**Website:** [https://cloud.google.com/speech-to-text](https://cloud.google.com/speech-to-text)

## 11. Amazon Transcribe

Amazon Transcribe is an automatic speech recognition (ASR) service from Amazon Web Services (AWS) designed for developers. Rather than being a user-facing application, it provides the powerful engine that developers can build into their own software for both batch and real-time transcription. This makes it an ideal audio to text converter for companies with existing AWS infrastructure looking to add transcription capabilities to their products, especially in contact centers or media workflows.

The service stands out with its deep integration into the AWS ecosystem and its specialized features for business analytics. It can automatically redact personally identifiable information (PII) from transcripts, identify different audio channels (like in a two-person call), and works with Contact Lens to provide in-depth analytics for customer service calls. It’s a foundational tool for building custom transcription solutions rather than an out-of-the-box editor.

### Key Features & Use Case

Amazon Transcribe is built for developers and businesses that need to integrate a powerful transcription engine into their existing applications and workflows, particularly within an AWS environment.

- **Best For:** Developers building applications, businesses with high-volume call centers, and media companies managing large-scale content pipelines.

- **Batch & Streaming:** Supports both pre-recorded audio files (batch) and live audio feeds (streaming).

- **Contact Lens Analytics:** Provides advanced analytics for contact centers, including sentiment analysis and call summarization.

- **PII Redaction:** Automatically identifies and removes sensitive personal data from transcripts to help with compliance.

- **Custom Models:** Allows you to train the engine with your own data to recognize specific terminology like product names or industry jargon.

### Pricing & Limitations

Amazon Transcribe uses a pay-as-you-go pricing model that can be complex, as rates vary by region and feature usage.

- **Free Tier:** A generous free tier is available for new AWS customers, typically including 60 minutes per month for the first 12 months.

- **Standard Pricing:** Billed per second of audio processed. Rates differ for standard transcription, medical transcription, and call analytics.

- **Pay-As-You-Go:** You only pay for what you use, which is cost-effective for variable workloads but can be hard to predict.

The main limitation is its developer-first approach. It’s an API, not a user-friendly application with a text editor. This makes it unsuitable for individuals who just want to upload a file and get a quick transcript without any coding.

**Website:** [https://aws.amazon.com/transcribe](https://aws.amazon.com/transcribe)

## 12. Microsoft Azure Speech to Text

Microsoft Azure's Speech to Text service is an enterprise-grade solution designed for developers and businesses needing to integrate powerful transcription capabilities into their own applications and workflows. Rather than a standalone editor, it provides a robust set of APIs and SDKs that can handle everything from real-time streaming transcription to processing large batches of audio files. This makes it a powerful audio to text converter for organizations already invested in the Microsoft ecosystem.

The service stands out for its deployment flexibility. It supports containerized deployment, allowing businesses to run the transcription models on their own infrastructure for enhanced data privacy and control. It also offers advanced features like language identification, custom model training, and detailed pronunciation assessments, which are critical for specialized use cases in education, call centers, and content moderation.

### Key Features & Use Case

Azure Speech to Text is ideal for organizations that require a customizable, scalable, and secure transcription engine to build upon, rather than a simple out-of-the-box tool.

- **Best For:** Developers, large enterprises, and businesses with specific compliance or data residency needs.

- **Multiple Processing Modes:** Offers real-time, fast, and batch transcription to suit different application requirements.

- **SDKs and REST API:** Provides extensive support for various programming languages, enabling deep integration.

- **Enterprise Deployment:** Supports containerization for on-premises deployment and integration with other Azure Cognitive Services.

- **Advanced Add-ons:** Includes speaker diarization, language identification, and pronunciation assessment for specialized analysis.

### Pricing & Limitations

Azure uses a pay-as-you-go model that is highly scalable but can be complex for newcomers.

- **Free Tier:** Offers a limited amount of free service hours per month for experimentation.

- **Pay-As-You-Go:** Billed per audio hour, with prices varying based on the model (standard, custom) and region. Standard transcription typically starts around $1 per hour.

- **Commitment Tiers:** Discounted rates are available for high-volume usage.

The main limitation is its developer-centric nature. It lacks a consumer-friendly interface for direct file uploads and editing, requiring technical expertise to implement. Pricing can also be confusing, as rates vary by region and API version.

**Website:** [https://azure.microsoft.com/en-us/pricing/details/cognitive-services/speech-services/](https://azure.microsoft.com/en-us/pricing/details/cognitive-services/speech-services/)

## Top 12 Audio-to-Text Converters Comparison

| Product | Core features | Quality & UX | Value / Unique selling points | Target audience | Pricing model |

|